How voters review and verify ballots


This is a report of qualitative research into how voters mark, review, verify, and cast their ballots. It was conducted as part of the work to update the human factors requirements (accessibility, usability, and voter privacy) in the federal voting system standards, the Voluntary Voting System Guidelines (VVSG), and to fill gaps in our understanding of how voters interact with ballot marking devices.

This research was published by the National Institute of Standards and Technology (NIST) as NIST GCR 24-051, also available on NIST’s website.

How voters review and verify ballots (NIST GCR 24-051)

Key findings

Each participant voted the same ballot twice, once on paper and once on a ballot marking device (BMD), for a total of 70 voting sessions. Several major themes emerged about the influences that shape voters’ attitudes and behaviors:

Past voting experiences shape expectations for the voting process

A voter’s “mental model” of the process is heavily influenced by their local election history and familiarity with technology.

- Confusion over the BMD printout: Voters accustomed to hand-marking often struggled to understand the purpose of a BMD printout, sometimes mistaking it for a receipt rather than the official ballot to be cast.

- Demographic influences: Participants with higher accessibility needs and lower education levels were, surprisingly, more likely than other groups to review their selections carefully and verify their ballots.

Voters preferred ballot marking devices

Over two-thirds of participants (25/35) preferred BMDs to hand-marked ballots because they were easy to use and made it easy to correct errors.

- Voter confidence: BMDs increased voter confidence by making it easy to identify and fix mistakes.

- Error correction: Voters were far more successful at fixing errors on BMDs. Of 16 voters who made errors on a BMD, 11 fixed them; of 11 who made errors on a hand-marked ballot, only 1 fixed it.
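To make the size of that gap concrete, the counts above can be turned into correction rates. This is a small illustrative Python sketch; the `ERRORS` dictionary and the `correction_rate` helper are hypothetical names introduced here, and the numbers are only the ones reported above.

```python
# Error-correction counts reported above: voters who made at least one error,
# and how many of them fixed it, keyed by ballot type.
ERRORS = {
    "BMD": {"made_errors": 16, "fixed": 11},
    "Hand-marked": {"made_errors": 11, "fixed": 1},
}


def correction_rate(made_errors: int, fixed: int) -> float:
    """Return the share of voters who fixed their errors, as a percentage."""
    if made_errors <= 0:
        raise ValueError("made_errors must be positive")
    return 100.0 * fixed / made_errors


for ballot_type, counts in ERRORS.items():
    rate = correction_rate(counts["made_errors"], counts["fixed"])
    print(f"{ballot_type}: {counts['fixed']}/{counts['made_errors']} fixed ({rate:.1f}%)")
```

Roughly 69% of the voters who made errors on a BMD fixed them, compared with about 9% of those who made errors on a hand-marked ballot.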

Voters did not have strong habits for verifying printed ballots

Voters generally did not feel the need to verify the final printed output of a BMD.

- Assumption of accuracy: Most participants trusted that the printer would accurately reflect their on-screen choices, likening it to the reliability of a home printer.

- The “final” screen fallacy: BMD instructions often emphasized checking the screen before printing, which inadvertently signaled to voters that the digital review was the final step.

The use of ballot marking devices alone did not encourage verification of printed ballots

Using a BMD did not, by itself, prompt voters to check the printed paper for accuracy.

- Misunderstanding the process: Fewer than half of the participants realized that the printed paper was the actual vote to be counted. Many assumed their vote was already recorded “in the cloud” or on the machine itself.

- Need for better guidance: The study suggests that without clear voter education, polling place signage, and explicit on-screen instructions, voters have little motivation to verify their printed ballots.

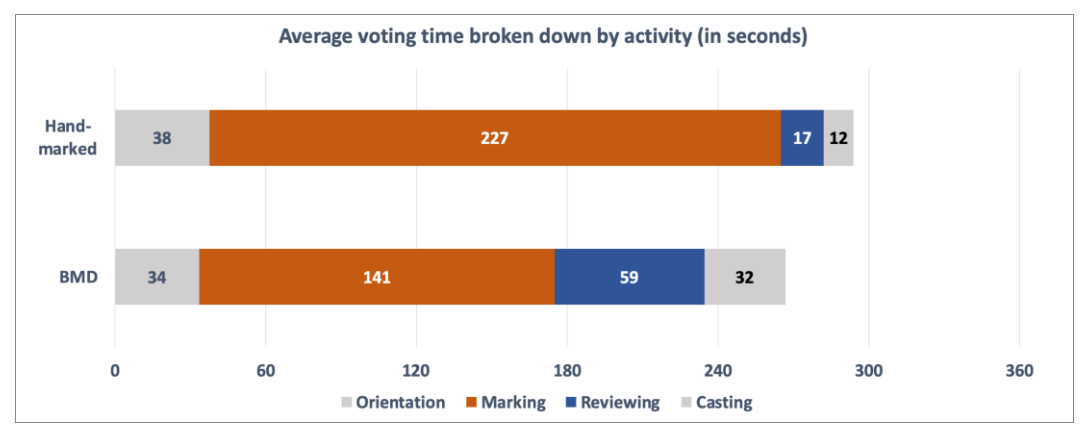

Voters marked, verified, and cast their ballots faster on a BMD

Efficiency varied widely depending on the voter’s intent and the voting system used.

- Range of time: The fastest voters finished in barely a minute because they voted only in specific contests. On the BMD, structured review screens allowed a more streamlined verification process than reviewing a paper ballot page by page.

- Speed of interaction: The electronic interface let voters move through contests more quickly than reading and marking a paper ballot by hand.

Average voting times by activity

| Ballot type | Total | Orient | Mark | Review | Verify & Cast |
|---|---|---|---|---|---|
| Hand-marked | 294 sec | 38 sec | 227 sec | 17 sec | 12 sec |
| BMD | 266 sec | 34 sec | 141 sec | 59 sec | 32 sec |
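The per-activity averages in this table add up to each reported total, and comparing them shows where the BMD saved or cost time. The following is a minimal Python sketch of that arithmetic; the `TIMES` dictionary and the `activity_sum` and `differences` helpers are hypothetical names introduced here, and the values are copied from the table.

```python
# Average times in seconds, copied from the table above.
ACTIVITIES = ("orient", "mark", "review", "verify_and_cast")

TIMES = {
    "Hand-marked": {"total": 294, "orient": 38, "mark": 227, "review": 17, "verify_and_cast": 12},
    "BMD": {"total": 266, "orient": 34, "mark": 141, "review": 59, "verify_and_cast": 32},
}


def activity_sum(row: dict) -> int:
    """Sum the per-activity averages for one ballot type."""
    return sum(row[a] for a in ACTIVITIES)


def differences(a: dict, b: dict) -> dict:
    """Per-column difference a - b in seconds (positive means a took longer)."""
    return {k: a[k] - b[k] for k in ("total",) + ACTIVITIES}


for name, row in TIMES.items():
    # Check that the activity averages add up to the reported total.
    print(f"{name}: activities sum to {activity_sum(row)} sec, reported total {row['total']} sec")

diff = differences(TIMES["Hand-marked"], TIMES["BMD"])
for activity, seconds in diff.items():
    print(f"{activity}: hand-marked minus BMD = {seconds:+d} sec")
```

Both sums match the reported totals. The BMD’s overall advantage of 28 seconds comes from marking, which was 86 seconds faster, while BMD voters spent 42 seconds longer reviewing and 20 seconds longer verifying and casting.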

About the research

This research was conducted by Suzanne Chapman, Lynn Baumeister, and Whitney Quesenbery.

This was a qualitative study focused on observing participants as they voted. Each participant voted twice, first on a hand-marked paper ballot and then on one of three ballot marking devices (BMDs). After each voting session, we interviewed participants about their experience, which allowed us to confirm our observations and clarify their intent. In all, 35 voters marked both a pre-printed paper ballot and a ballot on a BMD, for a total of 70 voting sessions.

Related resources

Visit our page on voting systems to find more resources about the usability and accessibility of voting systems.